Test Driven Development & Behavior Driven Development For Big Data in Scala


Overview

Software testing plays an important role in the life cycle of software development. It is imperative to identify bugs and errors during software development and increase the quality of the product.

Therefore, one must focus on software testing. There are many approaches, and Test Driven Development is one of them.

TDD is a key practice of Extreme Programming (XP); it prescribes that code is written or changed only in response to unit test results.

## Test Driven Development (TDD)

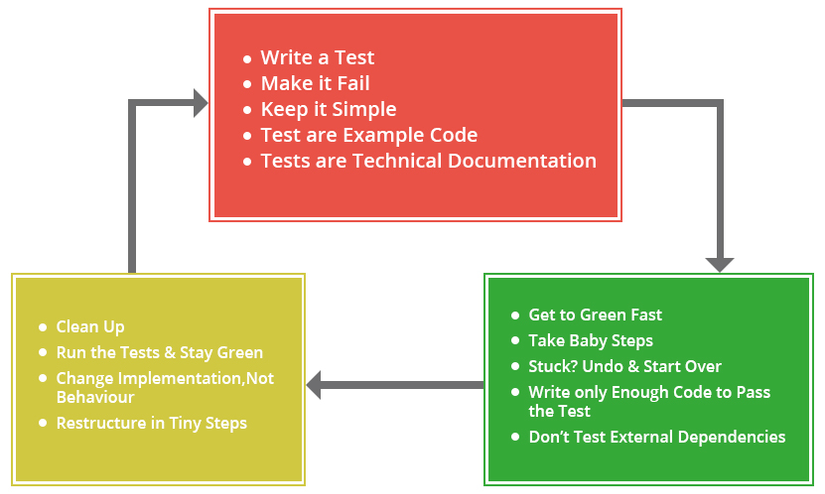

Test-Driven Development (TDD) is a software development process built around test-first development. The developer first writes a fully automated test case, then writes the production code needed to pass that test, and then refactors. The steps are given below:

- Firstly, add a test.

- Run all the tests and confirm that the new test fails.

- Update the code to make it pass the new tests.

- Run the tests again; once they pass, refactor the code and repeat the cycle.
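The cycle above can be sketched in plain Scala without any test framework. The `WordCount` object and its `count` function are hypothetical, chosen only to illustrate the steps: the checks in `WordCountSpec` are written first (and fail while `count` is missing or returns an empty map), then `count` is implemented to make them pass, after which it can be refactored while the checks keep guarding its behavior.

```scala
// Step 3: production code, written only after the checks below existed
// and had been seen to fail. (Hypothetical example function.)
object WordCount {
  // Counts case-insensitive words separated by whitespace.
  def count(text: String): Map[String, Int] =
    text.toLowerCase
      .split("\\s+")
      .filter(_.nonEmpty) // a leading space or empty input yields an empty token
      .groupBy(identity)
      .map { case (word, occurrences) => word -> occurrences.length }
}

// Step 1: the tests, written before the production code.
object WordCountSpec {
  def run(): Unit = {
    assert(WordCount.count("") == Map.empty[String, Int], "empty input has no words")
    assert(WordCount.count("a b a") == Map("a" -> 2, "b" -> 1), "repeated words are counted")
    assert(WordCount.count("Spark  spark") == Map("spark" -> 2), "case and extra spaces are ignored")
  }
}
```

Calling `WordCountSpec.run()` after every change (step 4) tells us immediately whether a refactoring broke the behavior.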

Test Driven Development with Legacy Code

In simple words, code without tests is known as legacy code. A big disadvantage of legacy code is that it is not easily understandable (for both the development and the business teams), and that is a major reason why it is difficult to change the code for new features.

Therefore, code without tests is bad code. Writing code together with tests is necessary for productivity and for making modifications easy.

Why Test Driven Development (TDD)?

3 Benefits of Test Driven Development

It gives us a way to think through our requirements or design before we write our functional code. It is a programming technique that lets us take small steps while building software, which is more productive than attempting to code in large steps. For example, suppose you write some code, compile it, and test it, and the test fails. It is much easier to find and fix the defect if you have written two new lines of code than if you have written a thousand.

What is Test Driven Development?

- An efficient and attractive way to proceed in smaller steps.

Following TDD means

- Fewer Bugs

- Higher quality software

- Focus on single functionality at a given point in time

Importance of Test Driven Development (TDD)

- Requirements — Drive out requirement issues early (more in-depth focus on requirements).

- Rapid Feedback — Many small changes vs One big change

- Values Refactoring — Refactor often to lower impact and risk.

- Design to Test — Test-driving is a good design practice.

- Tests as information — Documenting decisions and assumptions.

Acceptance Test Driven Development (ATDD) Overview

ATDD stands for Acceptance Test Driven Development. This technique is applied before development starts and brings customers, testers and developers into the loop.

Together, they decide the acceptance criteria and work accordingly to meet the requirements. ATDD helps ensure that all project members understand what needs to be done and implemented. Failing tests give us quick feedback that a requirement is not being met.

Benefits of Acceptance Test Driven Development (ATDD)

- Because acceptance tests are defined first, ATDD reduces defect and bug-fixing effort as the project progresses.

- ATDD focuses only on 'What' and not 'How', which makes it much easier to meet the customer's requirements.

- ATDD brings developers, testers, and customers together, which helps everyone understand what is required from the system.

Behaviour Driven Development (BDD) Overview

Behaviour Driven Development is similar to Test Driven Development. In fact, Behaviour Driven Development can be seen as an extended version of Test Driven Development.

The process is similar to TDD: the test is written first, followed by the production code. The main difference is that in BDD the test is written in plain, descriptive English-like grammar, as opposed to TDD.

This type of development:

- Explains the behavior of the software/program.

- Is user-friendly: the major benefit of BDD is that it can easily be understood by a non-technical person as well.
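To show how a BDD test reads like plain English, here is a minimal Given/When/Then sketch in plain Scala. The `Bdd.step` helper and the bank-account rule are hypothetical and are not a real library API; in practice a framework such as ScalaTest (for example its `GivenWhenThen` trait) would play this role.

```scala
// Hypothetical Given/When/Then helper: each step records its plain-English
// description so the scenario reads like a specification. The log is global
// and mutable, which is fine for a single demo run.
object Bdd {
  private val log = scala.collection.mutable.ListBuffer.empty[String]

  def step[A](keyword: String, description: String)(body: => A): A = {
    log += s"$keyword $description"
    body
  }

  def report: List[String] = log.toList
}

object BankAccount {
  // Hypothetical business rule: a withdrawal never takes the balance below zero.
  def withdraw(balance: Int, amount: Int): Either[String, Int] =
    if (amount <= balance) Right(balance - amount) else Left("insufficient funds")
}

object WithdrawalScenario {
  import Bdd.step

  def run(): Unit = {
    val balance = step("Given", "an account with a balance of 100") { 100 }
    val result = step("When", "the customer withdraws 150") { BankAccount.withdraw(balance, 150) }
    step("Then", "the withdrawal is refused and the balance is unchanged") {
      assert(result == Left("insufficient funds"))
    }
  }
}
```

After `WithdrawalScenario.run()`, `Bdd.report` holds the three sentences of the scenario, which a non-technical stakeholder can review directly.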

Features of Behavior Driven Development

- The major change is in the thought process, which shifts from thinking in terms of tests to thinking in terms of behavior.

- A ubiquitous language is used, so it is easy to explain.

- BDD approach is driven by business value.

- It can be seen as an extension of TDD; it uses natural language that non-technical stakeholders can also easily understand.

Behavior Driven Development (BDD) Approach

We believe that testing and test automation (as practiced in TDD) are essential to the success of any BDD initiative. Testers have to write tests that validate the behavior of the product or system being built.

The resulting tests are readable by non-technical users as well. For Behavior Driven Development to be successful, it is crucial to identify and verify only those behaviors that contribute directly to business outcomes.

A developer in a BDD environment has to identify what to test and what not to test, and understand why a test failed. Much like Test Driven Development, BDD recommends that tests be written first and describe the functionality the product needs in order to meet the requirements.

Behavior Driven Development helps greatly when building detailed automated unit tests because it focuses on testing behavior instead of implementation. The developer therefore writes test cases with the expected behavior (the scenario) in mind rather than the code implementation.

As a result, when the implementation changes but the behavior does not, the developer does not have to change the test, its inputs, or its expected outputs. That makes automated unit testing faster and more reliable.
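A small sketch of this idea: a behavior check that only looks at inputs and outputs can validate two different implementations without being touched. Both `Dedup` implementations and the `DedupBehaviour` check are hypothetical examples.

```scala
// Two hypothetical implementations of the same behaviour:
// remove duplicate ids while keeping the first-seen order.
object Dedup {
  // First version: tracks seen ids in a mutable set.
  def withSet(ids: Seq[String]): Seq[String] = {
    val seen = scala.collection.mutable.Set.empty[String]
    ids.filter(id => seen.add(id)) // add returns true only for a new id
  }

  // Refactored version using the standard library.
  def withDistinct(ids: Seq[String]): Seq[String] = ids.distinct
}

object DedupBehaviour {
  // The behaviour check knows nothing about how deduplication is done.
  def holdsFor(impl: Seq[String] => Seq[String]): Boolean =
    impl(Seq("b", "a", "b", "c", "a")) == Seq("b", "a", "c") &&
      impl(Seq.empty) == Seq.empty
}
```

Replacing `withSet` with `withDistinct` is a pure refactoring, so `DedupBehaviour.holdsFor` passes for both without any change to the test.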

Though BDD has its own set of advantages, it can sometimes fall prey to oversimplification. Development teams and testers therefore need to accept that while a failing test guarantees that the product is not ready to be delivered to the client, a passing test does not mean that the product is ready for launch.

This gap closes when the development, testing, and business teams share updates and progress reports on time. Since testing effort shifts toward automation that covers all business features and use cases, this approach supports a high defect detection rate through higher test coverage, faster changes, and timely releases.

Features of Behavior Driven Development (BDD)

Teams and developers are encouraged to adopt BDD for several reasons, some of which are listed below:

- BDD provides clear guidance on organizing communication between all the stakeholders of a project, whether technical or non-technical.

- BDD enables early testing in the development process, and early testing means fewer bugs later.

- By using a language that is understood by all, rather than a programming language, a better visibility of the project is achieved.

- Developers feel more confident that their code won't break, which gives better predictability.

Differences Between BDD, TDD, and ATDD

BDD uses a ubiquitous language that can be easily understood by developers as well as customers and other stakeholders.

TDD is about writing tests to satisfy system requirements as outlined in the BRD (Business Requirements Document), whereas BDD encourages developers to write tests that reflect the stakeholders' behavioral expectations of the system.

BDD, TDD and ATDD are closely related to each other, but none of them can replace the others, as each has its own merits and demerits.

BDD is more customer-focused than TDD and ATDD. This allows much easier collaboration with non-technical stakeholders than TDD.

Test Driven Development (TDD) For Big Data

We have been developing Big Data based solutions for the past few years, and Big Data components usually run on large infrastructure.

To make our solutions high-performing and resilient, it is important that we test every aspect of our Big Data solutions.

Most Big Data solutions comprise the following three layers:

Data Ingestion Layer

This layer is responsible for bringing data into the system, where it will be further processed and analyzed. It involves data collection processes that connect to remote data sources and collect the data on a defined schedule.

After this, the layer also handles some preprocessing of the data, making it ready for the next layer by moving it to the data warehouse and to real-time streams.
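Preprocessing logic in this layer is easiest to test when it is kept as a pure function. The sketch below is hypothetical (the CSV layout, `SensorReading` and `Preprocess.parse` are assumptions for illustration): it parses a raw line, tags it with its source, and rejects malformed lines instead of passing them to the next layer.

```scala
// Hypothetical record produced by the ingestion layer.
final case class SensorReading(sensorId: String, value: Double, source: String)

object Preprocess {
  // Parses "sensorId,value" and tags the record with its source.
  // Malformed input is returned as Left(reason) so it can be routed aside.
  def parse(line: String, source: String): Either[String, SensorReading] =
    line.split(",", -1).map(_.trim) match {
      case Array(id, raw) if id.nonEmpty =>
        raw.toDoubleOption
          .map(v => SensorReading(id, v, source))
          .toRight(s"not a number: '$raw'")
      case _ => Left(s"malformed line: '$line'")
    }
}
```

Unit tests can then cover the happy path and each rejection reason without connecting to any real data source.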

Data Processing Layer

The Data Processing Layer is responsible for metadata extraction and for deriving any other value from the data ingested by our data ingestion layer.
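Metadata extraction can also be unit-tested on small in-memory batches. In this hypothetical sketch, `Event`, `BatchMetadata` and `MetadataExtractor` are illustrative names: the extractor derives the record count, the number of distinct users, and the time range of a batch.

```scala
// Hypothetical ingested event and the metadata we derive from a batch of them.
final case class Event(userId: String, epochMillis: Long)
final case class BatchMetadata(recordCount: Int, distinctUsers: Int,
                               minTime: Option[Long], maxTime: Option[Long])

object MetadataExtractor {
  def extract(batch: Seq[Event]): BatchMetadata = {
    val times = batch.map(_.epochMillis)
    BatchMetadata(
      recordCount = batch.size,
      distinctUsers = batch.map(_.userId).distinct.size,
      minTime = times.minOption, // None for an empty batch
      maxTime = times.maxOption
    )
  }
}
```

Testing the empty-batch case explicitly is worthwhile, since the time range is undefined there.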

Data Storage Layer

This layer is responsible for storing our data in an optimized and scalable manner. Methodologies such as Star/Snowflake schemas, data partitioning, and indexing are used to make the Data Storage layer high-performing, which in turn allows low-latency queries.
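Partitioning rules are a good candidate for unit tests, because a silent change in the layout breaks queries that rely on partition pruning. The `Partitioning.partitionPath` helper below is hypothetical; it maps an event time (in UTC) to a Hive-style `year=/month=/day=` directory path.

```scala
import java.time.{Instant, ZoneOffset}

// Hypothetical storage-layer helper: event time -> Hive-style partition path.
object Partitioning {
  def partitionPath(epochMillis: Long): String = {
    val date = Instant.ofEpochMilli(epochMillis).atZone(ZoneOffset.UTC).toLocalDate
    // Zero-padded month/day keep directory names sortable.
    f"year=${date.getYear}%04d/month=${date.getMonthValue}%02d/day=${date.getDayOfMonth}%02d"
  }
}
```

Testing the boundary just before midnight UTC guards against accidental use of the local time zone.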

Below, we share our experience of testing these layers, using some popular tools/frameworks for each layer as examples.

Test Driven Development (TDD) Tools For Big Data

Apache Nifi

Apache Nifi is a widely used framework for Data Ingestion where we can define our workflows to ingest data from a particular source and route it to multiple destinations by defining processors, controller services, etc.

It also provides some processors for pre-processing work, such as converting data from one format to another or adding extra attributes to the incoming data.

While developing our workflows in Apache Nifi, it is very important to test our workflows and controller services used by them.

Apache Nifi Workflows

A Nifi workflow comprises various processors, each of which takes flow files as input and produces flow files as output; the Nifi workflow takes care of passing each processor's output to the next processor as input. Flow files carry the actual data fetched from external sources such as Amazon S3, HDFS, databases, APIs, etc.
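To reason about a workflow in tests, it can help to model it in plain Scala. This is a simplified, hypothetical model and not Nifi's API: a `FlowFile` here is just content plus attributes, a processor maps one flow file to zero or more flow files, and `Flow.run` feeds each processor's output to the next one.

```scala
// Hypothetical, simplified model of a flow (NOT Nifi's API).
final case class FlowFile(content: String, attributes: Map[String, String])

object Flow {
  type Processor = FlowFile => Seq[FlowFile]

  // Data enrichment: add an attribute (the value is an illustrative assumption).
  val tagSource: Processor = ff => Seq(ff.copy(attributes = ff.attributes + ("source" -> "s3")))

  // Data cleaning: drop an attribute that downstream systems do not need.
  val dropTemp: Processor = ff => Seq(ff.copy(attributes = ff.attributes - "tmp"))

  // Filtering: flow files with blank content are dropped.
  val dropEmpty: Processor = ff => if (ff.content.trim.isEmpty) Seq.empty else Seq(ff)

  // Runs the processors in order, passing each output to the next processor.
  def run(processors: Seq[Processor], input: Seq[FlowFile]): Seq[FlowFile] =
    processors.foldLeft(input)((files, p) => files.flatMap(p))
}
```

Each processor can be tested on its own, and `Flow.run` lets us check that the chain as a whole produces the expected flow files.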

Controller Services

Controller services are built-in services offered by Nifi that processors use to connect to external sources, such as the DBCP Connection Pool or Hive connector services, or for transformation services, such as an AVRO schema parser for our external sources. We configure controller services separately in Nifi so that processors can share them and use our data sources in an optimized manner.
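The same separation helps in tests: when a processor depends on a shared service interface instead of opening its own connection, a test can hand it an in-memory stub. The sketch below is hypothetical (`LookupService`, `InMemoryLookup` and `CountryEnricher` are illustrative names, not Nifi classes).

```scala
// Hypothetical service interface that processors depend on.
trait LookupService {
  def lookup(key: String): Option[String]
}

// In-memory stub standing in for a database-backed service in tests.
final class InMemoryLookup(data: Map[String, String]) extends LookupService {
  def lookup(key: String): Option[String] = data.get(key)
}

// A processor-like component that enriches records using the shared service.
final class CountryEnricher(service: LookupService) {
  def enrich(record: Map[String, String]): Map[String, String] =
    record.get("countryCode").flatMap(code => service.lookup(code)) match {
      case Some(name) => record + ("countryName" -> name)
      case None       => record // unknown or missing code: record passes unchanged
    }
}
```

In production, the same `CountryEnricher` would receive a service backed by a real connection pool.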

These are some tools used for Nifi workflow and custom processor testing:

- Nifi mock module for unit testing custom processors

- Generate FlowFile for stress/load testing

Nifi-mock module for Testing Custom Processor

We can also build custom processors according to our requirements, and Nifi offers this module to test them.

The Nifi-mock module lets us test each processor's functional behavior. For example, some processors in an Apache Nifi workflow transform data from one format to another, add new attributes (data enrichment), or remove unnecessary attributes (data cleaning) from flow files.

Nifi-mock provides a TestRunner class with which we can write test cases for each processor, set up mock data for them, and run them; running the TestRunner invokes the processor's onTrigger method.

The TestRunner converts the mock data we set up into flow files, creates the ProcessSession and ProcessContext needed to run the Nifi processor, and also invokes the processor's other lifecycle methods.

The TestRunner therefore executes a processor in much the same way as it runs in a staging or production environment, which gives us confidence that our processors will work properly in production.
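With nifi-mock itself, such a test typically creates a runner with `TestRunners.newTestRunner(...)`, enqueues input with `enqueue(...)`, calls `run()`, and checks the routing with methods such as `assertAllFlowFilesTransferred(...)`. Since that requires the Nifi libraries, the sketch below only imitates the same enqueue/run/assert shape in plain Scala; `MockRunner`, `UppercaseProcessor` and the relationship objects are hypothetical and are NOT the nifi-mock API.

```scala
// Hypothetical relationships, mirroring the idea of success/failure routes.
sealed trait Relationship
case object RelSuccess extends Relationship
case object RelFailure extends Relationship

// Hypothetical processor: upper-cases non-empty content, fails empty content.
final class UppercaseProcessor {
  def onTrigger(content: String): (Relationship, String) =
    if (content.nonEmpty) (RelSuccess, content.toUpperCase) else (RelFailure, content)
}

// Framework-free imitation of the test-runner pattern (NOT nifi-mock).
final class MockRunner(processor: UppercaseProcessor) {
  private val queue = scala.collection.mutable.Queue.empty[String]
  private val transferred = scala.collection.mutable.ListBuffer.empty[(Relationship, String)]

  def enqueue(content: String): Unit = queue.enqueue(content)

  // Triggers the processor once per queued item.
  def run(): Unit =
    while (queue.nonEmpty) transferred += processor.onTrigger(queue.dequeue())

  // Contents routed to the given relationship, in processing order.
  def outputsFor(rel: Relationship): List[String] =
    transferred.collect { case (`rel`, content) => content }.toList
}
```

A test then reads like a nifi-mock test: enqueue mock input, run, and assert on what reached each relationship.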

## Stress Testing vs Load Testing using Generate FlowFile

Another important aspect of testing is load testing. We must validate how our Nifi workflow behaves when data arrives at a higher velocity.

For this, Nifi provides the GenerateFlowFile processor, which we can use as a mock data source, specifying the number of files we want to generate along with their size. It keeps generating sample files, so we can test the flow under a heavy workload.
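The same idea can be applied to our own processing code outside Nifi. This hypothetical `LoadTest` harness generates a given number of synthetic payloads of a fixed size, pushes them through the code under test, and reports how many files and bytes were processed and the throughput. The counts and sizes are parameters, not values taken from Nifi.

```scala
// Hypothetical load-test harness in the spirit of GenerateFlowFile.
object LoadTest {
  final case class Result(processed: Int, totalBytes: Long, elapsedNanos: Long) {
    def filesPerSecond: Double =
      if (elapsedNanos == 0) Double.PositiveInfinity else processed * 1e9 / elapsedNanos
  }

  // Lazily generates `count` payloads of `sizeBytes` bytes each.
  def generate(count: Int, sizeBytes: Int): Iterator[Array[Byte]] =
    Iterator.fill(count)(Array.fill[Byte](sizeBytes)('x'.toByte))

  // Runs `process` over every generated payload and measures the elapsed time.
  def run(count: Int, sizeBytes: Int)(process: Array[Byte] => Unit): Result = {
    val start = System.nanoTime()
    var processed = 0
    var bytes = 0L
    generate(count, sizeBytes).foreach { payload =>
      process(payload)
      processed += 1
      bytes += payload.length
    }
    Result(processed, bytes, System.nanoTime() - start)
  }
}
```

Timing figures vary between machines, so a test should assert on counts and only compare throughput against a generous threshold.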

Apache Kafka

Apache Kafka is widely used as a real-time streaming source for Big Data platforms, so it is also part of the Data Ingestion Layer: Nifi collects the data from our data sources and writes it to Kafka topics. Kafka provides very low-latency, high-performance reads/writes and is used as a streaming source by many consumers such as Apache Spark, Apache Flink, Apache Storm, etc.

It is very important to test connectivity, performance, and concurrency before using it in production.
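A concurrency test can be written against an interface so that the same check runs against an in-memory stand-in during development and against a real Kafka-backed implementation later. In this hypothetical sketch, `Topic`, `InMemoryTopic` and `ConcurrencyCheck` are illustrative names, not Kafka APIs; several producer threads send messages at the same time, and the test checks that nothing is lost or duplicated (ordering is not asserted).

```scala
import java.util.concurrent.{ConcurrentLinkedQueue, Executors, TimeUnit}
import scala.jdk.CollectionConverters._

// Hypothetical topic abstraction; a Kafka-backed version would implement the same trait.
trait Topic {
  def send(message: String): Unit
  def readAll(): Seq[String]
}

// Thread-safe in-memory stand-in used for the sketch.
final class InMemoryTopic extends Topic {
  private val log = new ConcurrentLinkedQueue[String]()
  def send(message: String): Unit = { log.add(message); () }
  def readAll(): Seq[String] = log.asScala.toSeq
}

object ConcurrencyCheck {
  // Starts `producers` threads, each sending `perProducer` uniquely named messages.
  def run(topic: Topic, producers: Int, perProducer: Int): Seq[String] = {
    val pool = Executors.newFixedThreadPool(producers)
    for (p <- 0 until producers) {
      pool.submit(new Runnable {
        def run(): Unit = (0 until perProducer).foreach(i => topic.send(s"p$p-m$i"))
      })
    }
    pool.shutdown()
    require(pool.awaitTermination(30, TimeUnit.SECONDS), "producers did not finish in time")
    topic.readAll()
  }
}
```

With 4 producers sending 250 messages each, the check expects exactly 1000 distinct messages back.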

Continue Reading: XenonStack/Blog

All rights reserved