GPT-5.4 vs Claude Sonnet 4.6 (2026): The Ultimate AI Model Comparison

OpenAI’s GPT-5.4 (released March 5, 2026) and Anthropic’s Claude Sonnet 4.6 (released February 17, 2026) represent two competing approaches to the same market: large-context, agent-capable models optimized for knowledge work, coding, and long, multi-step workflows. Both support million-token context windows (in beta), but they make different tradeoffs in price, token efficiency, and where they concentrate engineering effort.

- GPT-5.4 is positioned as OpenAI’s frontier model for professional work: it unifies reasoning, coding (Codex lineage), and native computer-use/agent abilities, and OpenAI reports an 87.3% mean score on a spreadsheet-modeling benchmark for junior investment banking tasks. It also exposes a “Thinking” mode that surfaces in-flight plans during multi-step reasoning.

- Claude Sonnet 4.6 is Anthropic’s mid-tier model that has received a large upgrade in capability — deliberately targeting Opus-level task performance at Sonnet-class prices. Sonnet 4.6 is reported to hit ~79.6% on SWE-bench (coding), strong tool/agent scores (OSWorld, Terminal variants), and is now the default Claude model for many Anthropic products.

Using GPT-5.4 and Claude 4.6 models side by side normally means maintaining accounts with different providers and paying each one separately. CometAPI addresses this: with a single API key you can switch between both models and pay only for the tokens you use, with no subscription.

What is GPT-5.4?

GPT-5.4 is OpenAI’s incremental frontier reasoning release aimed at professional knowledge work, rolled out in ChatGPT (as “GPT-5.4 Thinking”), the API, and Codex. OpenAI positions it as the first mainline reasoning model to inherit frontier coding capabilities from their GPT-5.3-Codex lineage, with improved computer-use, tool search, reduced hallucinations, and experimental 1M-token support in Codex. It is available as gpt-5.4 (and gpt-5.4-pro for higher performance) in the API.

Key product features (what changed vs GPT-5.2 / 5.3)

- Upfront plan-of-thinking: GPT-5.4 can provide and present an upfront plan of its reasoning so users can steer mid-response — a workflow improvement for long tasks and multi-step deliverables.

- Tool search & improved tool integration: better discovery of connectors and smoother tool use for agents across tools/files.

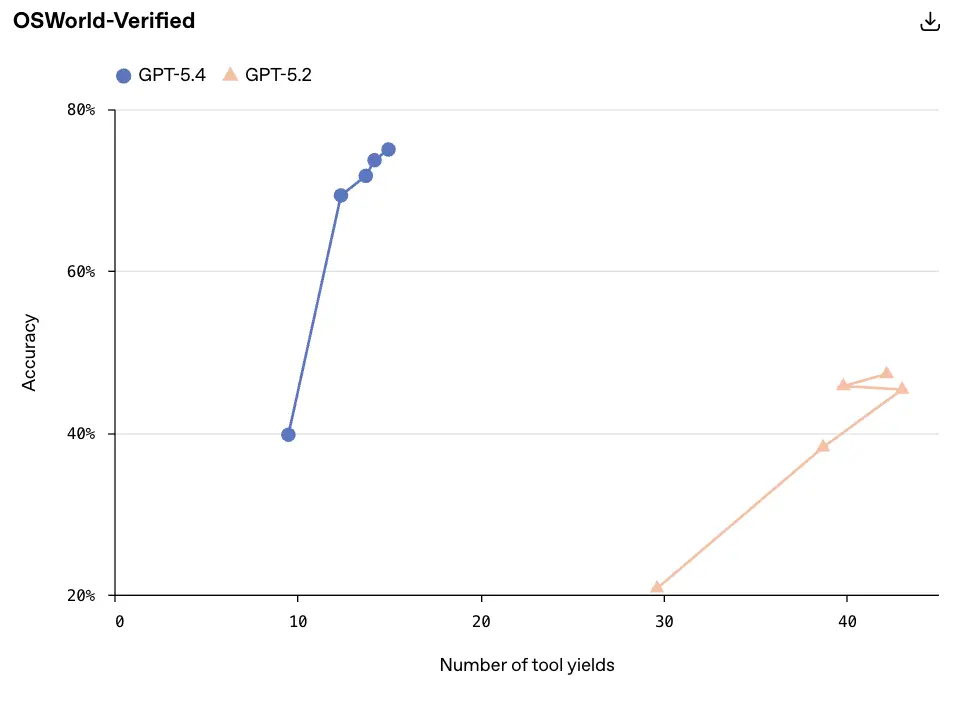

- Token efficiency & speed: OpenAI claims GPT-5.4 is more token-efficient and faster per reasoning effort than GPT-5.2, i.e., fewer tokens to reach the same answer (translating into cost and latency benefits in many workflows).

- Context window experimentation: Codex includes experimental support for a 1M token context window (API flag / experimental config). In ChatGPT, context windows remain at the standard (non-1M) settings at launch; Codex/Dev paths allow broader contexts for now.

Measured strengths and OpenAI’s evidence

OpenAI released a suite of benchmark results for GPT-5.4 showing:

- GDPval (professional tasks): GPT-5.4 achieves 83.0% (wins or ties vs professionally produced baselines) — positioned as a new SoTA in OpenAI’s GDPval evaluations.

- Coding (SWE-Bench Pro): GPT-5.4 posts 57.7% on SWE-Bench Pro (OpenAI’s publicly reported coding benchmark variant). GPT-5.4 also shows substantial gains on internal spreadsheet modelling tasks (mean score 87.3% vs 68.4% for GPT-5.2).

- Tool/Browse performance: OpenAI reports BrowseComp 82.7% for GPT-5.4, showing improved web research and tool-backed retrieval.

- Factuality: OpenAI reports GPT-5.4’s individual claims are 33% less likely to be false and full responses 18% less likely to contain any error vs GPT-5.2 on a de-identified user prompt set. That’s a nontrivial improvement for production documentation and legal/finance workflows.
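To make the factuality figures concrete, the relative reductions can be applied to a baseline error rate. The sketch below is only illustrative arithmetic: the baseline rates are hypothetical placeholders (OpenAI's absolute error rates are not quoted in this article), and only the 33% and 18% relative reductions come from the text above.

```python
def reduced_rate(baseline_rate: float, relative_reduction: float) -> float:
    """Apply a relative reduction (0.33 means "33% less likely") to a baseline rate."""
    if not 0.0 <= baseline_rate <= 1.0:
        raise ValueError("baseline_rate must be between 0 and 1")
    if not 0.0 <= relative_reduction <= 1.0:
        raise ValueError("relative_reduction must be between 0 and 1")
    return baseline_rate * (1.0 - relative_reduction)


# HYPOTHETICAL baselines for illustration only; not figures published by OpenAI.
baseline_claim_error = 0.06      # assumed share of individual claims that are false
baseline_response_error = 0.30   # assumed share of responses containing any error

# Relative reductions reported for GPT-5.4 vs GPT-5.2 in the text above.
new_claim_error = reduced_rate(baseline_claim_error, 0.33)
new_response_error = reduced_rate(baseline_response_error, 0.18)

print(f"claim-level error rate:    {baseline_claim_error:.2%} -> {new_claim_error:.2%}")
print(f"response-level error rate: {baseline_response_error:.2%} -> {new_response_error:.2%}")
```

The point of the exercise is that a relative reduction says nothing about the absolute error rate: a team still needs its own baseline measurement before deciding how much human review a workflow requires.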

What is Claude Sonnet 4.6?

Anthropic’s Claude Sonnet 4.6 is a generational upgrade to the Sonnet tier: Sonnet is the mid-tier “workhorse” model family that balances capability and cost. Sonnet 4.6 aims to deliver Opus-level intelligence on many tasks (Opus is Anthropic’s premium family), with 1M token context support (beta/availability caveats) and large improvements in agentic robustness, document comprehension, and coding. Anthropic made Sonnet 4.6 the default Sonnet model for claude.ai and Claude Cowork without increasing Sonnet pricing.

Key product/features

- Hybrid reasoning + agentic reliability: Sonnet 4.6 improves instruction-following, tool reliability, and adaptive thinking modes used in agentic pipelines. This improves performance on multi-step workflows and orchestrated multi-agent approaches (context compaction + subagents).

- 1M token context (beta): Anthropic supports 1M context for several internal tasks and documents, and reports results both for <1M public API variants and internal >1M evaluations — with context compaction methods to extend effective capability beyond the raw context window.

- Pricing continuity: Sonnet 4.6 kept Sonnet’s previous price points ($3 / 1M input tokens and $15 / 1M output tokens), which keeps it attractive for high-volume production use.

Measured strengths and Anthropic’s evidence

Anthropic released a comprehensive Sonnet 4.6 system card and blog post documenting internal and third-party evaluations:

- SWE-bench Verified (coding): Sonnet 4.6 scores 79.6% on Anthropic’s reported SWE-bench Verified results, a strong showing on real developer tasks and GitHub issue resolution tests. (Note: Anthropic’s SWE variants and OpenAI’s SWE-Bench Pro are not necessarily identical in composition; see the caveat below.)

- BrowseComp: Sonnet 4.6 achieves 74.01% in a single-agent BrowseComp test, and with multi-agent orchestration (via context compaction and subagents) 82.07% — demonstrating that Sonnet’s multi-agent setups can match or exceed single-agent BrowseComp results from competitors in practice. Anthropic reports test-time compute scaling benefits as well.

Quick Comparison: GPT-5.4 vs Claude Sonnet 4.6

The table below compares the core technical specifications of both models.

| Feature | GPT-5.4 | Claude Sonnet 4.6 |
|---|---|---|
| Developer | OpenAI | Anthropic |
| Release | March 2026 | February 2026 |
| Context Window | ~1.05M tokens | Up to ~1M tokens |
| Maximum Output | ~128K tokens | ~128K tokens |
| Modalities | Text, image, computer interaction | Text, image |
| Agent Capability | Native computer use | Tool-based automation |
| Architecture Focus | General AI agent | Safe reasoning AI |
| Best For | Automation & agents | Coding & reasoning |
| Reasoning Style | Chain-of-thought planning | Adaptive reasoning |

GPT-5.4 focuses on agentic autonomy, while Claude Sonnet 4.6 emphasizes structured reasoning and safe deployment.

Feature and technical comparison

1. Context window (how much the model can “see” at once)

- GPT-5.4: OpenAI public notes and press reporting indicate support for very large context windows (OpenAI has touted up to 1M tokens in certain variants and integration notes), with product tiers that trade context for latency and cost. Early coverage suggests both a 400k context offering in common dev paths and higher beta windows for Pro/Enterprise.

- Claude Sonnet 4.6: Anthropic explicitly advertised beta support for a 1-million-token context in its Sonnet/Opus 4.6 line, positioning long-horizon reasoning as a core design goal. The Sonnet family’s claim centers on sustained chain-of-thought over long documents and agent traces.

Practical effect: When your task is multi-file codebase reasoning, lengthy legal contracts, or data lakes of unstructured text, context window size materially improves accuracy, reduces the amount of manual retrieval engineering, and permits conversational workflows that reference long histories. But larger windows come with engineering tradeoffs: longer latencies, higher inference cost, and auditing complexity.
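Before committing to a long-context workflow, it helps to estimate whether a document set fits in the window at all. The following is a minimal sketch under stated assumptions: the 4-characters-per-token ratio is a rough heuristic (real tokenizers vary by model and language), the 1M window and 128K output reservation mirror the approximate figures in the comparison table above, and treating reserved output as part of the window is itself an assumption. `check_budget` and `BudgetReport` are hypothetical names, not part of either vendor's SDK.

```python
from dataclasses import dataclass
from typing import Iterable

CHARS_PER_TOKEN = 4  # ASSUMPTION: crude heuristic, not a real tokenizer


@dataclass
class BudgetReport:
    estimated_tokens: int
    window_tokens: int
    reserved_output_tokens: int

    @property
    def fits(self) -> bool:
        # Input plus the output we want to leave room for must fit in the window.
        return self.estimated_tokens + self.reserved_output_tokens <= self.window_tokens


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def check_budget(
    documents: Iterable[str],
    window_tokens: int = 1_000_000,         # approximate beta window from the article
    reserved_output_tokens: int = 128_000,  # approximate max output from the article
) -> BudgetReport:
    """Sum estimated tokens across documents and compare against the window."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return BudgetReport(total, window_tokens, reserved_output_tokens)


if __name__ == "__main__":
    corpus = ["x" * 1_200_000, "y" * 800_000]  # stand-in for real files
    report = check_budget(corpus)
    print(report.estimated_tokens, "estimated tokens; fits:", report.fits)
```

If the estimate lands near the limit, count tokens with the provider's own tokenizer or token-counting endpoint before relying on the result, and fall back to retrieval or chunking when the set does not fit.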

2. Native computer use & agent capabilities

- GPT-5.4: One headline capability is “built-in computer use” — the model can generate code that interacts with the host OS or applications (via Playwright and similar toolchains), issue UI commands from screenshots, and orchestrate multi-step automation flows. OpenAI frames this as enabling autonomous agents that can run software rather than just produce code.

- Claude Sonnet 4.6: Sonnet 4.6 improves agent planning and persistence: longer task-horizon planning, better internal state management, and improved tool selection. Anthropic emphasizes agent reliability (sustaining multi-step workflows), not just raw automation.

Practical effect: For automation-heavy workflows (e.g., “scrape, analyze, write report, submit ticket”), GPT-5.4’s native computer-use orientation may enable faster prototype agents. Sonnet 4.6’s emphasis on deliberative planning may reduce failure modes in longer agentic chains — helpful where auditability and stepwise correctness are paramount.

GPT-5.4 handles screenshots, mouse and keyboard input, and multi-step workflows at a cutting-edge level. This is one of the most important differences discussed in this article for operations, testing, browser automation, and cross-application tasks.

3. Coding & software engineering

- GPT-5.4: Upgrades to Codex and a “/fast mode” to accelerate token throughput and developer feedback loops; positioned as stronger at multi-step development tasks and at integrating with platforms like GitHub Copilot and VS Code. Early integrations show Copilot enabling GPT-5.4 assistance across mainstream IDEs.

- Claude Sonnet 4.6: Anthropic focuses on compressing multi-day projects into hours, improved debugging, code review, and self-correction. Anthropic also points to better handling of large codebases and fewer hallucinated APIs in unit tests.

Practical effect: Both models significantly accelerate developer workflows. Which to pick comes down to integration (your stack, Copilot vs Anthropic SDK), latency/cost at scale, and which model aligns with your correctness expectations under adversarial or safety-critical constraints.

4. Knowledge work, documents, and office productivity

- GPT-5.4: OpenAI has geared GPT-5.4 for documents, spreadsheets, and presentations; the company rolled out ChatGPT integrations for Excel and Sheets that let the model execute complex financial modeling tasks. The pitch: enable analysts to automate three-statement models, extract structured tables, and generate slides directly from raw data.

- Claude Sonnet 4.6: Anthropic emphasizes long-context summarization and planning for knowledge work — better at sustaining multi-part arguments across long documents and producing structured outputs for legal, research, and policy workflows.

Practical effect: If your firm needs spreadsheet automation and tight integrations with Microsoft/Google productivity suites, OpenAI’s announced add-ins accelerate adoption. If your need is forensic analysis across long legal or research texts, Sonnet’s long-context claims are compelling.

5. Multimodal support

- GPT-5.4: Marketed primarily as a text-first model with robust document and spreadsheet handling; image input support is noted in some GPT-5 series variants but GPT-5.4’s emphasis is on text + tool integrations (and developer-facing Codex features for programmatic tool use).

- Claude Sonnet 4.6: Anthropic emphasizes text, coding, and agent planning. Sonnet 4.6 is described as highly capable in “computer use” (simulated GUI interactions, automated tool invocation) and long-session planning; multimodal claims are less front-and-center than the model’s reasoning/agent strengths.

Practical takeaway: For workflows that require mixed media (images + text), buyers should validate modality support in the specific API tier they plan to use. For text-heavy, multi-file, and spreadsheet workflows both models prioritize encodings and compaction strategies that make long context tractable.

Side-by-side: capability and benchmark comparison

Below are concise, directly comparable datapoints drawn from the vendors’ published pages and system cards. I include the primary caveats inline.

Browse / web-research (BrowseComp)

- GPT-5.4 (OpenAI) — 82.7% BrowseComp. (OpenAI: BrowseComp 82.7% in the GPT-5.4 release materials.)

- Claude Sonnet 4.6 (Anthropic) — 74.01% single-agent BrowseComp; 82.07% multi-agent BrowseComp when run with an orchestrator + subagents / context compaction (Anthropic reports both values and explains the multi-agent advantage). Anthropic also reports test-time compute scaling (e.g., 64.69% @1M sampled tokens rising toward 74% at higher total sampled tokens).

Coding and developer work (SWE/Terminal)

SWE-style tests: Anthropic reports Sonnet 4.6 at 79.6% on SWE-Bench Verified (their verified, human-validated coding subset). OpenAI reports GPT-5.4 at 57.7% on SWE-Bench Pro (OpenAI’s public pro variant). These results show that Sonnet is very strong on Anthropic’s chosen SWE variant. Important caveat: the SWE datasets and evaluation protocols differ by vendor, so the two percentages are not measured on the same scale and should not be compared directly.

Professional / knowledge work (GDPval / GDPval-AA / OfficeQA)

- OpenAI (GPT-5.4) — GDPval 83.0% (OpenAI’s GDPval metric across 44 occupations; OpenAI frames this as matching or exceeding industry professionals in 83% of pairwise comparisons). OpenAI also reports very strong spreadsheet/presentation gains (e.g., internal investment banking task mean score 87.3% vs 68.4% for GPT-5.2).

- Anthropic (Sonnet 4.6) — Anthropic reports strong performance on internal finance/OfficeQA and Real-World Finance tasks; Sonnet matches Opus 4.6 on OfficeQA and posts high task-completion rates in internal finance evaluations; Anthropic reports Sonnet 4.6 89.9% on GPQA Diamond and other high marks on domain tests. These are powerful signals that Sonnet is highly capable on enterprise document tasks.

Data-backed comparison table

| Dimension | GPT-5.4 (OpenAI) | Claude Sonnet 4.6 (Anthropic) |
|---|---|---|
| BrowseComp (vendor reported) | 82.7% (base) / 89.3% (Pro, some settings). | 74.01% (single) → 82.07% (multi-agent). |
| Coding (vendor-specific variant) | SWE-Bench Pro ~57.7% (OpenAI reported). | SWE-bench Verified ~79.6% (Anthropic reported). |
| Pricing (input/output per 1M tokens) | ~$2.50 / $15 (base list examples). | $3 / $15; strong caching & batch savings. |
| 1M token context | Experimental via Codex/dev; ChatGPT rollout varies. | 1M context beta + compaction strategies. |
| Safety posture | Factuality improvement (↓33% false claims vs GPT-5.2). Balanced refusal/completion. | Highly conservative refusals on many safety slices (system card numbers). |

Pricing Comparison

Pricing is one of the most important factors for organizations deploying AI at scale.

API Pricing

| Pricing | GPT-5.4 | Claude Sonnet 4.6 |
|---|---|---|
| Input tokens | $2.50 / 1M | $3 / 1M |
| Output tokens | $15 / 1M | $15 / 1M |

GPT-5.4 is slightly cheaper on input tokens.

This difference becomes significant for high-volume workloads such as:

- enterprise automation

- data analysis pipelines

- large-scale code generation
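A quick way to see how the input-price gap scales is to compute monthly spend for a given token volume. The sketch below assumes the list prices quoted earlier in this article ($2.50 / $15 per 1M tokens for GPT-5.4 and $3 / $15 for Claude Sonnet 4.6) and ignores caching, batch discounts, and long-context surcharges. The dictionary keys are labels for this example, not verified API model identifiers, and the monthly volumes are hypothetical.

```python
# (input_price, output_price) in USD per 1M tokens, taken from the figures in this article.
PRICES_PER_MILLION = {
    "gpt-5.4": (2.50, 15.00),
    "claude-sonnet-4.6": (3.00, 15.00),  # label only; not a verified API model ID
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost in USD for the given token volumes."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens / 1_000_000) * input_price + (output_tokens / 1_000_000) * output_price


if __name__ == "__main__":
    # HYPOTHETICAL workload: 2B input tokens and 200M output tokens per month.
    input_tokens, output_tokens = 2_000_000_000, 200_000_000
    for model in PRICES_PER_MILLION:
        cost = monthly_cost(model, input_tokens, output_tokens)
        print(f"{model}: ${cost:,.2f}")
```

Because output prices are identical in these list figures, the difference comes entirely from input volume, so input-heavy workloads such as long-document analysis feel it most. Token efficiency matters as well: a model that needs fewer tokens for the same task can be cheaper even at a higher per-token price.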

Subscription Pricing

Both platforms offer similar subscription tiers.

| Plan | ChatGPT | Claude |
|---|---|---|
| Standard | $20/month | $20/month |
| Premium | $200/month | $200/month |

At the subscription level, pricing parity means the real cost difference appears primarily in API usage.

Looking for cost-effectiveness: Access GPT-5.4 and Claude Sonnet 4.6 via CometAPI.

If your workflow requires both GPT-5.4 and Claude 4.6 models (each with its own characteristics), paying different vendors separately can be costly and cumbersome. This is where CometAPI's multi-model aggregation platform comes in.

CometAPI's philosophy is simple: instead of maintaining multiple official accounts to compare outputs, users can access leading models on a single platform, quickly switch between them, and evaluate workflows side-by-side. It also offers a 20% API discount and pay-as-you-go pricing without a subscription.

Strengths and Weaknesses

Where GPT-5.4 Wins

Advantages:

- superior automation capabilities

- better terminal-based coding

- lower API cost

- stronger performance in knowledge-work tasks

- broader general intelligence

Best for:

- startups

- automation systems

- developer tooling

- research assistants

Where Claude Sonnet 4.6 Wins

Advantages:

- stronger reasoning depth

- best-in-class coding benchmark scores

- better large-context retrieval

- multi-agent collaboration tools

Best for:

- enterprise software teams

- infrastructure engineering

- research environments

The Future: Multi-Model Workflows

An important industry trend is emerging.

Rather than choosing a single AI model, many teams now use multiple models simultaneously.

Example workflow:

- GPT-5.4 → automation and data analysis

- Claude Sonnet 4.6 → deep coding and architecture

- other models → specialized tasks

This model-routing architecture allows teams to maximize strengths while minimizing weaknesses.
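A model-routing layer can start as a lookup from task type to model. The sketch below is one possible shape, not a description of any vendor's or aggregator's API: the task categories follow the example workflow above, `"gpt-5.4"` is the API name mentioned earlier in this article, and the other identifiers are placeholders to be replaced with the real model IDs from your provider. The `clients` mapping of callables is a hypothetical stand-in for real SDK calls, so the example runs without network access.

```python
from typing import Callable, Dict

# Task type -> model label. Only "gpt-5.4" is taken from the article;
# the other identifiers are PLACEHOLDERS, not verified API model IDs.
ROUTES: Dict[str, str] = {
    "automation": "gpt-5.4",
    "data_analysis": "gpt-5.4",
    "coding": "claude-4.6-placeholder",
    "architecture": "claude-4.6-placeholder",
}
DEFAULT_MODEL = "other-model-placeholder"


def route(task_type: str) -> str:
    """Pick a model label for a task type, falling back to a default."""
    return ROUTES.get(task_type, DEFAULT_MODEL)


def run(task_type: str, prompt: str, clients: Dict[str, Callable[[str], str]]) -> str:
    """Dispatch the prompt to whichever client is registered for the routed model."""
    model = route(task_type)
    if model not in clients:
        raise KeyError(f"no client registered for model {model!r}")
    return clients[model](prompt)


if __name__ == "__main__":
    # Fake clients that echo which model handled the prompt.
    fake_clients = {
        name: (lambda prompt, name=name: f"[{name}] {prompt}")
        for name in set(ROUTES.values()) | {DEFAULT_MODEL}
    }
    print(run("coding", "Refactor the billing module", fake_clients))
    print(run("translation", "Translate this note", fake_clients))
```

Keeping the routing table as data rather than branching logic makes it easy to re-run your own evaluations and move a task type from one model to another without touching the calling code.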

Final Verdict

Both GPT-5.4 and Claude Sonnet 4.6 are among the most powerful AI models available in 2026. GPT-5.4 excels in agentic automation and integrated workflows, while Claude Sonnet 4.6 offers efficient, scalable reasoning capabilities with competitive pricing.
