GPT-5.4 in OpenClaw: Benefits, Configuration & Best Practices

OpenClaw’s recent release adds first-class, forward-compatible support for OpenAI’s GPT-5.4 and introduces a “memory hot-swappable” architecture that lets OpenClaw agents change which model and which memory store are active at runtime with minimal disruption. This unlocks large-context workflows (GPT-5.4’s expanded context windows), on-the-fly model specialization, and cost/latency optimizations for production agents. The upgrade is available in OpenClaw’s releases and accompanying docs; the examples below show practical configuration, code snippets, benchmark context, and recommended best practices.

What OpenClaw’s update actually shipped (quick summary)

On March 9, 2026, OpenClaw, an open-source, OpenAI-adjacent agent framework, shipped a major core release (2026.3.7) that adds first-class support for GPT-5.4 and a novel hot-swappable memory mechanism in its context engine. The maintainers describe the release as turning a widely used experimental agent framework into an “Agent Operating System”, with the aim of making production-grade agent workflows and model switching seamless for developers and teams.

Three practical items that matter for agent builders:

- First-class GPT-5.4 support — model aliases and provider mappings that let agents select GPT-5.4 as the primary execution model (including channel overrides and per-agent model pins).

- Context Engine & distributed channel binding — improvements in how OpenClaw assembles long context windows from memory, tool outputs, and channel history so high-capacity models get well-structured inputs.

- Memory hot-swappable architecture — clearer memory plugin surfaces and workflows so you can replace memory backends or upgrade agents without losing “identity” or corrupting persisted state (the memory itself remains the single source of truth). OpenClaw’s memory design (plain Markdown files, indexed search, pluginized retrieval) is part of what enables safe hot-swapping.

GPT-5.4: what it is and where its benchmarks improve

GPT-5.4 is the latest OpenAI frontier model release focused heavily on professional productivity (spreadsheets, document and presentation editing, multi-step reasoning and tool driving). According to OpenAI and independent press coverage, the release emphasizes:

- Expanded context: GPT-5.4 introduces the next tier of context windows, with an experimental 1M-token window and improved long-context handling available via Codex/Codex-compatible endpoints. Configuration knobs like model_context_window and model_auto_compact_token_limit are exposed for developers. This lets you keep far larger conversation state, documents, and code bases in active context.

- Higher spreadsheet and reasoning accuracy: OpenAI reports a major improvement on spreadsheet modeling tasks (mean scores ~87% vs ~68% for GPT-5.2 on their banking/analyst spreadsheet benchmark).

- Accuracy and factuality improvements: Early reviews and QA show a ~33% reduction in hallucinations and fewer error-prone outputs relative to GPT-5.2, with notable gains in document drafting and spreadsheet work. Reviewers also cited an ~18% decrease in error-prone responses on certain productivity tasks.

- Integrated computer-use and Codex lineage improvements — GPT-5.4 includes capabilities inherited from the Codex lineage that improve code generation, interactive debugging, and operational tool driving (mouse/keyboard/screenshot automation in some demonstrations). This makes it better at the write-run-inspect-patch cycle typical of agent loops.

Benchmarks & comparative context (what numbers mean)

- Spreadsheet modelling: on OpenAI’s internal spreadsheet benchmark, GPT-5.4 scores a mean of ~87.3% vs ~68.4% for GPT-5.2. This is the headline number the vendor uses to show task-specific gains.

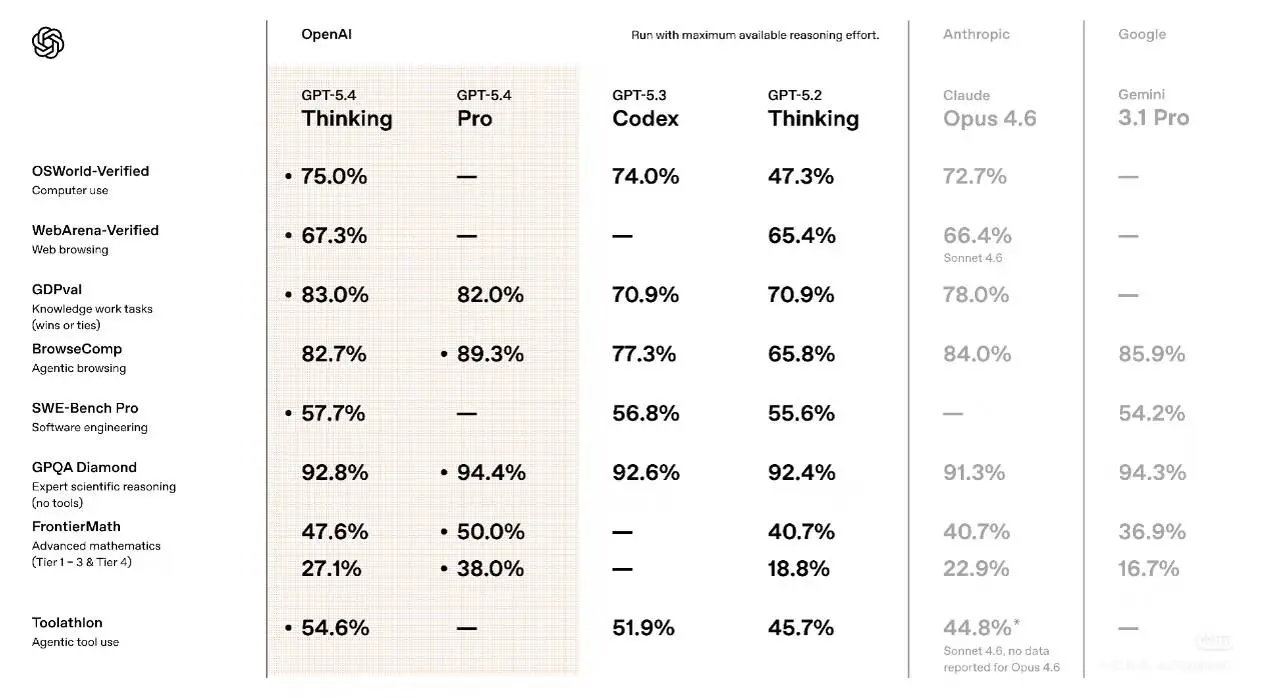

- Computer interaction (OSWorld / agent-style tests): independent testers and community runs show GPT-5.4 improves on agent interaction tasks that involve desktop or simulated UI manipulation, sometimes edging out recent Anthropic model variants by small margins on these task suites (differences are meaningful for agents, but not necessarily decisive in every workload).

Interpretation: GPT-5.4 is not a “magic bullet” that wins everything. It has clear strengths in integrated tool use, code execution patterns, and spreadsheet reasoning — which are exactly the workloads OpenClaw agents often run. For agent builders, the combination of improved executor reliability (Codex lineage) + planner competence + better long-context handling is highly relevant.
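To put the spreadsheet headline in perspective, it helps to separate the absolute gain (in percentage points) from the relative gain over the older model. The short calculation below uses only the two scores quoted above; the variable names are illustrative.

```python
# Reported mean scores on OpenAI's internal spreadsheet benchmark (quoted above).
gpt_5_4 = 87.3
gpt_5_2 = 68.4

absolute_gain = gpt_5_4 - gpt_5_2              # difference in percentage points
relative_gain = absolute_gain / gpt_5_2 * 100  # percent improvement over GPT-5.2

print(f"absolute gain: {absolute_gain:.1f} points")   # absolute gain: 18.9 points
print(f"relative gain: {relative_gain:.1f}%")         # relative gain: 27.6%
```

A gain of about 19 points is large for a single benchmark, but it is one vendor-run suite, so it says little about workloads that look different from spreadsheet modelling.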

OpenClaw supports GPT-5.4: what changed and why it matters

OpenClaw’s release (see the project releases page) updates model resolvers and runtime to be forward-compatible with GPT-5.4’s expanded context and token limits and adds the “memory hot-swappable” capability so agents can switch memory backends or models at runtime. This is done in three concrete ways: 1) model metadata and resolver updates to accept the larger context and token limits; 2) agent runtime changes to orchestrate graceful model swaps and cache warm-up; 3) a memory API allowing multiple memory channels and hot switch triggers.

Version 2026.3.7’s support for GPT-5.4 plus a memory hot-swappable design provides two practical, complementary advantages:

- Straightforward model upgrade path. OpenClaw can now present GPT-5.4 as a selectable "runtime" for agents, letting you switch from older GPT-5.x models or alternative vendors without reworking your agent logic. The OpenClaw update explicitly declares stable GPT-5.4 integration in the core.

- Memory Hot-Swapping. Instead of persisting a single linear memory snapshot, OpenClaw’s Context Engine allows memory partitions to be detached, swapped or migrated at runtime — e.g., swap in a high-recall vector DB shard for debugging or swap to a GDPR-sanitized memory variant for external audits — without stopping the agent. That lowers disruption risk in production and enables use-case-specific memory configurations (debugging vs privacy vs performance).

Practical performance breakthroughs & advantages

OpenClaw’s integration focuses on four practical areas where the GPT-5.4 upgrade pays off:

- Tool orchestration fidelity. GPT-5.4’s improved internal tool-search and reasoning reduces tool-call churn (fewer redundant tool calls and fewer retries). That translates to lower API calls and faster completion for complex flows. Early reports indicate token and tool-call efficiency improvements compared to older GPT-5.x models.

- Longer, richer context handling. OpenClaw agents can now keep much larger active contexts (including memory shards swapped in), allowing them to manage long conversations, multi-file projects, and iterative debugging without losing state.

- Better deterministic code output. For workflows that auto-generate code (CI hooks, function stubs, infrastructure templates), GPT-5.4 tends to produce more consistent and runnable outputs, reducing human review overhead. Independent tests show notable improvements in code quality metrics vs prior GPT-5 models.

- Memory continuity — “memory hot-swappable” lets you replace or augment memory stores (local cache, vector DB, LLM memory) without losing agent state or context, enabling A/B testing, rolling upgrades, and failover.

In the OOLONG benchmark, the new version of OpenClaw, combined with the lossless-claw plugin, scored 74.8, ahead of Claude Code at 70.3. OpenClaw also stayed stable and accurate as the context length increased, prompting the engineers running the on-site tests to remark that "it's too conservative to say it runs well."

How to configure and use GPT-5.4 in OpenClaw (step-by-step)

A simple OpenClaw workflow using GPT-5.4

A typical message flow looks like this:

- Users send messages via platforms such as Discord or Telegram.

- OpenClaw receives the messages through its gateway server.

- The gateway forwards the prompts to GPT-5.4 via the AI API provider.

- GPT-5.4 generates a response or triggers a tool action.

- OpenClaw sends the final result back to the user.
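The flow above can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's gateway code: `ModelReply`, `handle_message`, and the stub `fake_model` are hypothetical names, and the model call is replaced by a local function so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class ModelReply:
    text: str = ""
    tool_name: Optional[str] = None  # set when the model asks for a tool action
    tool_arg: str = ""


def handle_message(
    user_text: str,
    call_model: Callable[[str], ModelReply],
    tools: Dict[str, Callable[[str], str]],
) -> str:
    """Forward a prompt to the model, run one tool if requested, return the final text."""
    reply = call_model(user_text)
    if reply.tool_name is None:
        return reply.text
    if reply.tool_name not in tools:
        return f"Unknown tool requested: {reply.tool_name}"
    tool_output = tools[reply.tool_name](reply.tool_arg)
    # Feed the tool result back so the model can produce the user-facing answer.
    return call_model(f"{user_text}\n[tool:{reply.tool_name}] {tool_output}").text


def fake_model(prompt: str) -> ModelReply:
    """Stub standing in for the provider call to GPT-5.4 (hypothetical behaviour)."""
    if "[tool:" in prompt:
        return ModelReply(text="Result: " + prompt.rsplit("] ", 1)[1])
    if prompt.startswith("add "):
        return ModelReply(tool_name="add", tool_arg=prompt[4:])
    return ModelReply(text="echo: " + prompt)


tools = {"add": lambda arg: str(sum(int(x) for x in arg.split()))}

print(handle_message("hello", fake_model, tools))    # echo: hello
print(handle_message("add 2 3", fake_model, tools))  # Result: 5
```

In a real deployment the gateway, channel adapters, and provider client sit where the stubs are; the control flow (prompt in, optional tool round-trip, answer out) is the part this sketch is meant to show.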

Below are pragmatic, copy-pasteable configuration examples and workflows to run GPT-5.4 in OpenClaw safely and reproducibly. These are intentionally conservative: enable the model first in a test agent and instrument everything for metrics and errors.

Prerequisites

- OpenClaw upgraded to the release that includes GPT-5.4 mappings (the release notes referenced above).

- A valid API key with access to GPT-5.4. The examples below use a CometAPI endpoint, chosen for its lower price.

1) Model selection & resolver configuration (JSON / YAML / CLI)

Put this in ~/.openclaw/openclaw.json (or merge into your existing config). Adjust provider name and token reference as required by your environment.

</> JSON

{
  "agents": {
    "defaults": {
      "model": {
        "primary": "openai/gpt-5.4",
        "fallbacks": ["openai/gpt-5.3", "claude/opus-4.6"]
      },
      "workspace": "~/.openclaw/workspace"
    }
  },
  "models": {
    "providers": {
      "openai": {
        "api_key_env": "COMETAPI_API_KEY",
        "base_url": "https://api.cometapi.com/v1"
      }
    }
  },
  "plugins": {
    "slots": {
      "memory": "memory-core"
    }
  },
  "channels": {
    "modelByChannel": {
      "support-team": "gpt-5.4",
      "low-cost-batch": "gpt-5.3"
    }
  }
}

OpenClaw uses a model resolver to map logical model names (e.g., openai/gpt-5.4) to endpoints and runtime config. Add or update your resolver file (example models.yml):

</> YAML

# models.yml - OpenClaw model resolvers
models:
  openai/gpt-5.4:
    provider: openai
    model_id: gpt-5.4
    context_window: 1050000  # forward-compatible 1,050,000 tokens
    max_output_tokens: 128000
    api_base: "https://api.openai.com/v1"
    timeout_seconds: 120
    rate_limit_factor: 1.0

Or set it at runtime via CLI:

</> Bash

# Switch OpenClaw to use GPT-5.4 for the current agent session

openclaw model set openai/gpt-5.4

Notes

- agents.defaults.model.primary picks the default model. Use channels.modelByChannel for per-channel overrides so you can route high-impact channels to GPT-5.4 and less demanding channels to cheaper models. See OpenClaw model selection docs for ordering semantics.

- Please refer to the CometAPI model page for specific model names. If you wish to use OpenAI, replace the URL and API key with OpenAI's.

- The context_window and max_output_tokens keys reflect the forward-compatibility changes in OpenClaw’s resolver so the agent will not attempt to use stale Codex limits.
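To make the selection rules in these notes concrete, here is a small Python sketch of one plausible resolution order: channel override first, then the default primary, then the fallbacks. The precedence is an assumption for illustration (the OpenClaw docs define the real ordering), and `candidate_models` is a hypothetical helper, not an OpenClaw function. The config dictionary mirrors the relevant keys from the JSON example.

```python
from typing import Dict, List, Optional

config = {
    "agents": {"defaults": {"model": {
        "primary": "openai/gpt-5.4",
        "fallbacks": ["openai/gpt-5.3", "claude/opus-4.6"],
    }}},
    "channels": {"modelByChannel": {
        "support-team": "gpt-5.4",
        "low-cost-batch": "gpt-5.3",
    }},
}


def candidate_models(cfg: dict, channel: Optional[str] = None) -> List[str]:
    """Models to try, in order: channel override (if any), default primary, fallbacks."""
    model_cfg = cfg["agents"]["defaults"]["model"]
    by_channel: Dict[str, str] = cfg.get("channels", {}).get("modelByChannel", {})
    ordered: List[str] = []
    if channel in by_channel:
        ordered.append(by_channel[channel])
    ordered.append(model_cfg["primary"])
    ordered.extend(model_cfg.get("fallbacks", []))
    # Drop duplicates while keeping first-seen order.
    return list(dict.fromkeys(ordered))


print(candidate_models(config, "low-cost-batch"))
# ['gpt-5.3', 'openai/gpt-5.4', 'openai/gpt-5.3', 'claude/opus-4.6']
print(candidate_models(config))
# ['openai/gpt-5.4', 'openai/gpt-5.3', 'claude/opus-4.6']
```

Note that the example config mixes bare names (gpt-5.3) with provider-prefixed ones (openai/gpt-5.3), so the sketch treats them as different strings; whether OpenClaw normalizes them is not stated in the source.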

2) How to enable and test “memory hot-swapping”

OpenClaw’s memory subsystem is file-based (Markdown files) plus indexers/search plugins so you can safely swap backend plugins (e.g., SQLite vector, Milvus, or external memory services) without losing the raw memory files.

A common pattern:

- Standardize memory location: use a git-backed workspace, ~/.openclaw/workspace/, where MEMORY.md and memory/YYYY-MM-DD.md are authoritative.

- Install & configure a memory plugin (example: sqlite-vec) and point plugins.slots.memory at it in the config.

- Test migration: add a new plugin, run a shadow indexing job, compare retrieval results, then switch the plugins.slots.memory alias to the new plugin when satisfied.
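The "compare retrieval results" step can be made measurable. The sketch below computes a per-query Jaccard overlap between the top-k results of the old and the shadow backend and only recommends the switch above a threshold. The `Retriever` signature, the 0.8 threshold, and the toy indexes are assumptions for illustration; they are not part of OpenClaw.

```python
from typing import Callable, Dict, List

Retriever = Callable[[str, int], List[str]]  # (query, k) -> ranked document ids


def overlap_at_k(old: Retriever, new: Retriever, queries: List[str], k: int = 5) -> Dict[str, float]:
    """Per-query Jaccard overlap between the top-k ids returned by two retrievers."""
    scores: Dict[str, float] = {}
    for q in queries:
        a, b = set(old(q, k)), set(new(q, k))
        union = a | b
        scores[q] = len(a & b) / len(union) if union else 1.0
    return scores


def safe_to_switch(scores: Dict[str, float], threshold: float = 0.8) -> bool:
    """Recommend the switch only if the mean overlap meets the (assumed) threshold."""
    return bool(scores) and sum(scores.values()) / len(scores) >= threshold


# Toy stand-ins for the current plugin and the shadow-indexed candidate.
old_index = {"billing": ["m1", "m2", "m3"], "deploy": ["m4", "m5"]}
new_index = {"billing": ["m1", "m2", "m3"], "deploy": ["m4", "m6"]}


def old(q: str, k: int) -> List[str]:
    return old_index.get(q, [])[:k]


def new(q: str, k: int) -> List[str]:
    return new_index.get(q, [])[:k]


scores = overlap_at_k(old, new, ["billing", "deploy"])
print(scores["billing"])       # 1.0
print(safe_to_switch(scores))  # False
```

Low overlap is not automatically bad (a better index may surface better memories), so in practice a human spot-check of the disagreeing queries is a sensible complement to the number.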

Example plugin alias swap (bash pseudo-commands):

# install new plugin (example package)
pip install openclaw-memory-sqlite-vec

# update config safely (backup first)
cp ~/.openclaw/openclaw.json ~/.openclaw/openclaw.json.bak

# then edit JSON: plugins.slots.memory = "memory-sqlite-vec"

# reload gateway (safe restart)
systemctl restart openclaw || openclaw gateway restart

# run a retrieval consistency check using the test harness
openclaw test memory_consistency --samples 100

Why this is “hot-swappable”: the memory files stay the source of truth. Plugins implement indexing and retrieval layers; swapping them reindexes but does not change the underlying .md files. This allows model swaps without catastrophic identity drift: the agent still reads the same memory files.
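The separation between authoritative files and replaceable indexes can be illustrated with a toy model. Everything here is hypothetical (`MemoryIndex`, `SubstringIndex`, `TokenIndex`, `MemoryStore` are names invented for the sketch, and in-memory strings stand in for the Markdown files); it only shows the pattern of rebuilding a new index from the source of truth before making it active.

```python
from typing import Dict, List, Protocol, Set


class MemoryIndex(Protocol):
    def build(self, files: Dict[str, str]) -> None: ...
    def search(self, query: str) -> List[str]: ...


class SubstringIndex:
    """Naive backend: matches the query as a case-insensitive substring."""

    def build(self, files: Dict[str, str]) -> None:
        self._files = dict(files)

    def search(self, query: str) -> List[str]:
        q = query.lower()
        return sorted(name for name, text in self._files.items() if q in text.lower())


class TokenIndex:
    """Alternative backend: inverted index over whitespace-separated tokens."""

    def build(self, files: Dict[str, str]) -> None:
        self._postings: Dict[str, Set[str]] = {}
        for name, text in files.items():
            for token in text.lower().split():
                self._postings.setdefault(token, set()).add(name)

    def search(self, query: str) -> List[str]:
        return sorted(self._postings.get(query.lower(), set()))


class MemoryStore:
    """Holds the authoritative text; the index is a replaceable view over it."""

    def __init__(self, files: Dict[str, str], index: MemoryIndex) -> None:
        self.files = files
        self.swap_index(index)

    def swap_index(self, index: MemoryIndex) -> None:
        index.build(self.files)  # reindex from the source of truth
        self.index = index       # only then make the new backend active

    def search(self, query: str) -> List[str]:
        return self.index.search(query)


files = {
    "MEMORY.md": "User prefers concise answers",
    "memory/2026-03-09.md": "Deployed release notes",
}
store = MemoryStore(files, SubstringIndex())
before = store.search("concise")
store.swap_index(TokenIndex())
after = store.search("concise")
print(before, after)  # ['MEMORY.md'] ['MEMORY.md']
```

Because the swap never writes to `files`, a failed or disappointing backend can be rolled back by swapping the previous index in again.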

3) Example: pin an individual agent to GPT-5.4 (per-agent override)

You can override models per agent; add an agent entry like:

{
  "agents": {
    "my-analyst-agent": {
      "model": {
        "primary": "gpt-5.4"
      },
      "workspace": "~/.openclaw/workspace/analyst"
    }
  }
}

If the community release or your particular OpenClaw version requires CLI, you can also set a per-session model at runtime:

# Start a session and switch model for the live session

openclaw session start my-analyst-agent

openclaw session command /model gpt-5.4

Operational tip: pinning ensures deterministic behavior for that agent while you run A/B tests on others.


4) Using Codex 1M context options (API knobs)

If your OpenClaw deployment accesses OpenAI Codex endpoints directly, pass context options:

{
  "model": "openai-codex/gpt-5.4",
  "input": "...",
  "model_context_window": 1050000,
  "model_auto_compact_token_limit": 200000
}

Requests that exceed standard context windows may count at different usage rates (OpenAI docs note double accounting for requests beyond standard windows in Codex preview).
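Before sending a large request it can help to check it against these limits. The sketch below uses the values from the examples in this article (1,050,000-token window, 200,000-token compaction limit, 128,000 output tokens) and makes two assumptions that should be verified against the provider docs: that reserved output tokens count against the context window, and that model_auto_compact_token_limit is the prompt size at which history compaction kicks in. `plan_request` and `ContextPlan` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextPlan:
    fits: bool            # prompt plus reserved output stays inside the window
    should_compact: bool  # prompt has reached the auto-compaction threshold


def plan_request(
    prompt_tokens: int,
    context_window: int = 1_050_000,
    auto_compact_limit: int = 200_000,
    max_output_tokens: int = 128_000,
) -> ContextPlan:
    """Check a prompt size against the window and the compaction threshold."""
    fits = prompt_tokens + max_output_tokens <= context_window
    return ContextPlan(fits=fits, should_compact=prompt_tokens >= auto_compact_limit)


print(plan_request(150_000))    # ContextPlan(fits=True, should_compact=False)
print(plan_request(250_000))    # ContextPlan(fits=True, should_compact=True)
print(plan_request(1_000_000))  # ContextPlan(fits=False, should_compact=True)
```

A check like this is also a natural place to log when a request crosses into the more expensive pricing tier, once you know the exact threshold for your account.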

Best practices: maximizing GPT-5.4’s strengths in OpenClaw

Cost, latency & model mix

- Hybrid model strategy: Use a smaller, cheaper model for short queries and stream processing; hot-swap to GPT-5.4 for heavyweight analysis, summarization, and code generation that require long context. This reduces overall token cost while preserving quality. (Implement via routing rules such as the channels.modelByChannel overrides shown earlier.)

- Token compaction & retrieval augmentation: Use retrieval-augmented pipelines to limit tokens sent to the model — store long documents in a vector DB, retrieve relevant segments, and include only the most relevant chunks plus a compact plan. GPT-5.4’s tool search helps here by locating helpful tools or documents automatically.

- Warm-up & cold start: After a model swap, warm up the model with a short context priming run to avoid first-request latency spikes. Precompile any prompt templates and rehydrate critical memory channels. OpenClaw’s rolling strategy (see config) supports pre-warming.
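The token compaction advice above amounts to a packing problem: keep the most relevant retrieved chunks that fit a token budget. Here is a minimal greedy sketch. The `Chunk` tuple layout, the scores, and the token counts are made up for illustration; in practice the scores come from your retriever and the counts from a tokenizer.

```python
from typing import List, Tuple

Chunk = Tuple[str, float, int]  # (text, relevance score, token count)


def pack_chunks(chunks: List[Chunk], budget: int) -> List[str]:
    """Greedily keep the highest-scoring chunks that still fit in the token budget."""
    selected: List[str] = []
    remaining = budget
    for text, _score, tokens in sorted(chunks, key=lambda c: c[1], reverse=True):
        if tokens <= remaining:
            selected.append(text)
            remaining -= tokens
    return selected


chunks = [
    ("intro", 0.2, 300),
    ("stack trace", 0.9, 500),
    ("config", 0.7, 400),
    ("changelog", 0.6, 200),
]
picked = pack_chunks(chunks, budget=800)
print(picked)  # ['stack trace', 'changelog']
```

Greedy selection is not optimal in the knapsack sense, but it is predictable and cheap, which usually matters more inside an agent loop than squeezing out the last few tokens.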

Reliability & safety

- Graceful fallback: Implement timeouts and fallback plans (e.g., degrade to a cached answer from a previous session) to handle API rate limits or quota errors.

- Safety layers: Maintain policy filters and a verification step when outputs affect decisions. GPT-5.4 reduces hallucinations statistically, but verification is still important for high-stakes tasks.
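A graceful-fallback chain can be as simple as trying models in order and degrading to a cached answer. The sketch below assumes the provider client surfaces timeouts as `TimeoutError` and rate-limit or quota failures as `RuntimeError`; real clients raise their own exception types, so adjust the `except` clause accordingly. All function names are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

ModelCall = Callable[[str], str]


def call_with_fallback(
    prompt: str,
    models: List[Tuple[str, ModelCall]],
    cached_answer: Optional[str] = None,
) -> Tuple[str, str]:
    """Return (source, answer) from the first model that succeeds, else the cached answer."""
    for name, call in models:
        try:
            return name, call(prompt)
        except (TimeoutError, RuntimeError):
            continue  # timed out or rate-limited: try the next model
    if cached_answer is not None:
        return "cache", cached_answer
    raise RuntimeError("all models failed and no cached answer is available")


# Stubs simulating provider behaviour.
def rate_limited(prompt: str) -> str:
    raise RuntimeError("429: quota exceeded")


def timed_out(prompt: str) -> str:
    raise TimeoutError("request exceeded deadline")


def healthy(prompt: str) -> str:
    return "ok: " + prompt


print(call_with_fallback("hi", [("gpt-5.4", rate_limited), ("gpt-5.3", healthy)]))
# ('gpt-5.3', 'ok: hi')
print(call_with_fallback("hi", [("gpt-5.4", timed_out)], cached_answer="previous answer"))
# ('cache', 'previous answer')
```

Returning the source alongside the answer makes it easy to tell users (and your telemetry) when a response came from a fallback or from cache.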

Eval & monitoring

- Reproduce your benchmarks: Run head-to-head tests for your workloads (code completion, multi-file refactor, spreadsheet analysis) using a standard rubric. Public reports indicate strengths in spreadsheet and productivity tasks — validate with your data.

- Telemetry: Monitor token consumption, model latency, memory swap frequency, and answer quality (human ratings/automated tests). Use the telemetry to refine swap thresholds.
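As a starting point for the telemetry bullet, the following sketch records per-model latency and token counts plus the number of memory swaps, and reports a nearest-rank 95th-percentile latency. The `Telemetry` class is a hypothetical in-process collector; a production setup would more likely export these values to an existing metrics system.

```python
from collections import defaultdict
from typing import Dict, List


class Telemetry:
    """In-memory counters for model calls and memory swaps."""

    def __init__(self) -> None:
        self.latencies_ms: Dict[str, List[float]] = defaultdict(list)
        self.tokens: Dict[str, int] = defaultdict(int)
        self.swaps = 0

    def record_call(self, model: str, latency_ms: float, tokens: int) -> None:
        self.latencies_ms[model].append(latency_ms)
        self.tokens[model] += tokens

    def record_swap(self) -> None:
        self.swaps += 1

    def p95_latency_ms(self, model: str) -> float:
        """Nearest-rank 95th percentile of recorded latencies for one model."""
        values = sorted(self.latencies_ms.get(model, []))
        if not values:
            raise ValueError(f"no calls recorded for {model}")
        rank = (95 * len(values) + 99) // 100  # ceil(0.95 * n) using integers
        return values[rank - 1]


telemetry = Telemetry()
for i in range(1, 21):  # latencies 10, 20, ..., 200 ms
    telemetry.record_call("openai/gpt-5.4", latency_ms=10.0 * i, tokens=1_000)
telemetry.record_swap()

print(telemetry.p95_latency_ms("openai/gpt-5.4"))  # 190.0
print(telemetry.tokens["openai/gpt-5.4"])          # 20000
```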

Example: code review agent that hot-swaps

Goal: Run a routine lint + unit test summary on push (cheap model) and escalate to GPT-5.4 for multi-file refactor suggestions when tests fail or diffs exceed 10 files.

Flow (high level):

- Pre-commit trigger runs local/fast-small-coder to generate lint summary.

- If test_failures > 0 or diff_files > 10, trigger hot_swap to openai/gpt-5.4. Promote longterm_vector containing repo history.

- Run GPT-5.4 prompt that has entire failing stack traces + relevant code files pulled into context. Generate refactor patch and unit test changes.

- Human reviewer rates output; feedback updates memory.
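The escalation rule in this flow is small enough to express directly. The sketch below encodes the condition from the flow (any test failure, or more than 10 changed files) and returns the model name to use; `PushEvent` and `choose_model` are hypothetical names, and the actual hot-swap call is left out because its API is not described in the source.

```python
from dataclasses import dataclass

CHEAP_MODEL = "local/fast-small-coder"
HEAVY_MODEL = "openai/gpt-5.4"


@dataclass(frozen=True)
class PushEvent:
    test_failures: int
    diff_files: int


def choose_model(event: PushEvent, max_diff_files: int = 10) -> str:
    """Escalate to the heavy model on any test failure or an oversized diff."""
    if event.test_failures > 0 or event.diff_files > max_diff_files:
        return HEAVY_MODEL
    return CHEAP_MODEL


print(choose_model(PushEvent(test_failures=0, diff_files=3)))   # local/fast-small-coder
print(choose_model(PushEvent(test_failures=2, diff_files=3)))   # openai/gpt-5.4
print(choose_model(PushEvent(test_failures=0, diff_files=11)))  # openai/gpt-5.4
```

Keeping the threshold as a parameter makes it easy to tune from telemetry rather than hard-coding the value.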

Prompt skeleton (sent to GPT-5.4 after retrieval & compaction):

You are a senior reviewer. The repository has 12 changed files. Tests failed with stack traces below. Relevant files (retrieved): <file snippets>. Provide:
1) concise summary of root cause (3 bullets),
2) a minimal patch (diff) to fix,
3) test changes needed,
4) risk assessment and roll-back plan.

This use case highlights why large context + memory hot-swap is valuable: you can bring the full failing trace and multiple files into the model at once. Implement swap triggers conservatively to control costs.

Finally: who should adopt GPT-5.4 in OpenClaw (and when)

- Adopt now if your agents perform multi-step code/tool tasks, heavy spreadsheet automation, or complex document editing where iterative write-run-inspect cycles dominate developer time. The productivity and reliability gains are most visible here.

- Adopt carefully if you operate in cost-sensitive, high-volume chat channels where simpler reasoning suffices; use routing to preserve cost efficiency.

- Don’t assume one-model dominance: benchmark on your data. GPT-5.4 is a strong contender for agent workloads, but model choice must be evidence-driven.
